Disruption, Delay, and What Comes Next

In early 2025, something quietly unsettling happened in the job market, and then it started happening loudly.

U.S. employers announced roughly 1.2 million job cuts in 2025, with layoffs accelerating into the new year, particularly across technology, logistics, and corporate support functions (LinkedIn News, WSJ). In January alone, over 50,000 AI-related layoffs were announced by companies including Amazon, UPS, Dow, and Pinterest (Dave Fox on LinkedIn).

On paper, this still looks like a familiar story: post-pandemic over-hiring, interest-rate pressure, and companies “right-sizing.” But beneath those surface explanations, something more structural is unfolding.

At the World Economic Forum in Davos, Dario Amodei, CEO of Anthropic, delivered a stark warning, not in a sound bite but in a 20,000-word essay aimed squarely at policymakers and business leaders. His message wasn’t that markets will wobble, but that the nature of work itself is approaching a volatile transition within the next 12–18 months (WSJ coverage, YouTube).

What makes this warning different from previous automation fears is not the pace alone; it’s the scope.

Amodei noted that AI systems are now writing the majority of Anthropic’s own production code, a capability that simply did not exist three years ago. From that trajectory, he predicts that up to 50% of entry-level white-collar roles could be eliminated or radically compressed within the next 1–5 years.

This is where the historical analogy breaks.

When agriculture automated, workers moved to factories. When factories automated, workers moved into services and knowledge work. Each disruption replaced tasks, but preserved a path forward for general human cognition.

This time, the thing being automated is cognition itself.

AI is no longer just replacing repetitive actions or narrow workflows. It is increasingly capable of performing general cognitive labor: drafting, synthesizing, reasoning, coding, planning, and coordinating across domains. The very skills that once allowed workers to pivot between industries (finance, consulting, law, engineering, marketing operations) are being hollowed out simultaneously.

That’s why this moment feels different.

Amodei described this phase as “technological adolescence”: a period where society gains immense power faster than it develops the governance, institutions, and cultural norms needed to manage it responsibly. In adolescence, capability outpaces judgment, and mistakes scale quickly.

The unease many workers feel today isn’t just about layoffs. It’s about the growing realization that there may not be an obvious “next ladder” to climb this time.

And that raises the question we’re only beginning to confront:

If AI keeps advancing at this pace, not just augmenting work but absorbing it, what exactly happens to the job market next?

How Close Are We Really to Large-Scale Knowledge-Worker Displacement?

A lot of the current AI discourse assumes a near-instant collapse: massive displacement in 12–18 months, entire categories of knowledge work wiped out almost overnight. I don’t buy that timeline.

The capabilities clearly exist. We’ve all seen how quickly AI transformed software development, compressing workflows that once took weeks into hours. But capability is not the same thing as adoption, and history shows that large-scale process change moves far more slowly than technological breakthroughs.

Even in software, the most AI-ready industry, we don’t yet have dramatically better versions of the tools we rely on every day. There is no AI-native replacement for Microsoft Word or Google Docs that truly understands context, intent, and long-horizon work management. Task tracking platforms still behave like glorified to-do lists, despite years of hype around “intelligent work orchestration.”

Outside of tech, the gap is even wider. Companies still employ large front-office teams for bookkeeping and customer support, even though AI-driven automation in accounting and call centers has been technically feasible for years. The constraint hasn’t been whether AI can do the work; it’s been trust, integration cost, regulatory friction, and organizational resistance.

The same pattern shows up in knowledge infrastructure. Most enterprise search still relies on keyword-based indexing rather than semantic or vector-based retrieval, despite the clear advantages of embedding-driven search systems (a point widely discussed in the context of modern RAG architectures). Libraries, internal knowledge bases, and government archives remain slow to modernize because replacing them requires rethinking governance, data ownership, and workflows, not just installing new software.

Government and regulated industries are the clearest counterexample to the “imminent collapse” narrative. Large portions of the public sector still run on legacy systems decades old; U.S. airlines famously rely on mainframe technology that predates the internet, largely because modernization risk outweighs efficiency gains. These systems persist not due to ignorance, but because institutional change is expensive, politically risky, and slow.

Finally, process change itself is lagging. In many industries, the time it takes for a manual workflow to move from Point A to Point B is measured in weeks or months, not because automation is impossible, but because accountability, compliance, and human sign-off are deeply embedded into how work gets done.

So while AI may be advancing at an exponential pace, the world it is entering is still profoundly analog, fragmented, and resistant to rapid transformation. That friction matters. And it’s why sweeping predictions about near-term mass unemployment among knowledge workers underestimate the drag imposed by real institutions, real incentives, and real humans.

Unless we go through a truly massive transformation, one where people, processes, institutions, and governments are willing to change in fundamental ways, we are still very far from realizing AI’s full productivity benefits in everyday work. Most organizations today are experimenting at the edges, not reinventing how work actually gets done.

That’s why I believe we’re at least five years out from AI becoming disruptive at the scale many predictions suggest. The technology may be advancing quickly, but adoption, trust, governance, and organizational change move much more slowly.

AI Lowers the Barrier to Building, Not to Thinking

In the meantime, this transition period creates something we’re not talking about enough: new opportunity for knowledge workers, not just risk.

AI has quietly given us an unprecedented set of tools to build. As Jensen Huang puts it:

“In the future, programming will be done by describing what you want.”

“The computer will program itself with a great deal of guidance from us.”

“That means the barrier to creating software goes to zero.”

“And when the barrier goes to zero, everybody becomes a programmer.”

That doesn’t mean professional software engineers disappear. It means the hardest part of the work shifts:

- Understanding the problem

- Formulating the right questions

- Knowing what to test

- Knowing what good actually looks like

Those skills don’t vanish in an AI-enabled world; they become more valuable.

This is the window we’re in right now.

If you’re a knowledge worker, the opportunity isn’t to compete with AI on speed or output. It’s to use AI as leverage:

- Identify areas in your work or life that are slow, painful, or inefficient, and redesign them

- Look within your own domain or industry for problems that have been accepted as “just the way things are”

- Use AI to prototype, test, and iterate faster than was ever possible before

Every industry has unmet needs. Every domain has broken workflows. The tools to fix them are no longer locked behind elite technical barriers.

We’re not yet in a world where AI replaces everyone. We are in a world where the cost of building, experimenting, and inventing has collapsed.

That makes this moment less about fear and more about imagination.

Possible Post-AI Futures

In the near term, AI mostly looks like productivity tooling: fewer hires, more output, tighter teams. But if capability keeps compounding (and if companies keep treating this primarily as a cost play), the medium-term effects start to look less like “automation” and more like a restructuring of who gets to earn income, and why.

So instead of arguing “AI will” vs “AI won’t,” it’s more useful to ask:

What’s the dominant path the economy takes if AI keeps improving while policy, labor markets, and institutions respond at different speeds?

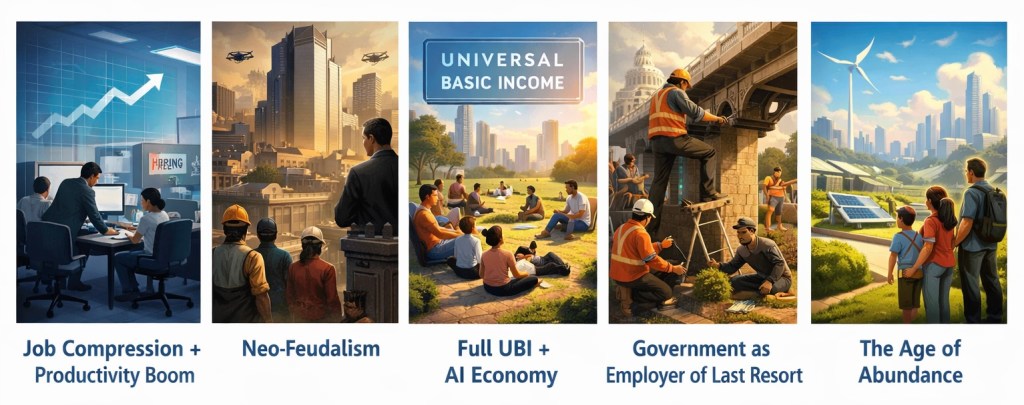

Here are five plausible trajectories. None of these are predictions. They’re maps, so you can recognize what’s happening as it starts to happen.

Scenario 1 – The Productivity Boom Nobody Feels

(Job Compression + Minimal Policy Change) – This is the “everything looks fine… until it doesn’t” path.

In this future, AI does exactly what economists once promised it would do: productivity explodes. Companies ship faster. Costs fall. Output rises. On paper, everything looks healthy.

The problem is that productivity gains no longer translate into more jobs.

Instead of creating new roles, AI compresses work. Teams shrink. Entry-level roles disappear first, followed by layers of coordination, analysis, and middle management. One highly skilled worker, augmented by AI, replaces five to ten people who used to do pieces of the same job.

Companies don’t feel cruel when they make these cuts; they feel efficient. From their perspective, nothing is “broken.” Shareholders are satisfied. Customers still get what they want. But the labor market quietly hollows out.

What emerges isn’t mass unemployment overnight. It’s something subtler and more corrosive: fewer rungs on the ladder. Careers become brittle. Wages stagnate even for people who still have jobs. Job searches take longer. People cling to roles not because they love them, but because there’s nowhere obvious to go next.

A day in the life

It’s Tuesday. Maya works at a fintech company and is technically “thriving.” Her team of four now does what a team of twenty did five years ago. AI drafts reports, summarizes meetings, and generates first-pass analyses. Maya’s job is to review, approve, and make judgment calls.

She logs off at 6 p.m., exhausted, not from workload but from pressure. She knows there are no junior hires coming up behind her. No buffer. No redundancy. If she burns out or slips up, she’s replaceable, not by another person but by a slightly better model.

This is a world where work still exists, but careers quietly stop making sense.

Scenario 2 – Neo-Feudalism, But With Better Apps

(Power Concentration Without Redistribution) – This is the “winner-take-most” path.

In this version of the future, AI doesn’t eliminate work entirely; it concentrates power.

A small number of firms own the most capable models, the data pipelines, and the infrastructure required to run them at scale. These companies don’t just dominate markets; they dominate capability. Everyone else rents intelligence the way they once rented cloud computing.

Employment still exists, but it becomes increasingly precarious. Many people work as contractors, moderators, evaluators, or short-term specialists plugged into AI-driven systems they don’t control. Ownership matters more than labor. Equity matters more than skill.

Social mobility slows. Wealth compounds upward. The distance between people who own AI systems and people who serve them grows wider each year.

This isn’t feudalism in the medieval sense (there are no castles or lords), but the structure feels familiar. Protection and access flow downward; value and control flow upward.

A day in the life

Jared wakes up and checks three dashboards before breakfast. Each represents a different platform he works for: content validation for one, model fine-tuning feedback for another, compliance review for a third. None offer benefits. None offer long-term security.

His income fluctuates week to week. Algorithms decide which tasks he gets. Appeals are automated. Human support is rare.

Across town, a handful of executives at one of those platforms review quarterly earnings. AI has allowed them to scale with minimal headcount. Profits are strong. Labor costs are negligible.

Both Jared and the executives are “part of the AI economy.” Only one of them has leverage.

Scenario 3 – Universal Basic Income and the Identity Crisis

(Full UBI + AI-Driven Economy) – This is the “we stabilize demand” path.

Here, governments acknowledge the obvious: AI is generating enormous economic value while reducing the need for human labor. Instead of forcing the market to absorb the shock, they decouple survival from employment.

Every citizen receives a guaranteed income, enough to cover basic needs. Not luxury. Stability.

Economically, this works better than many expect. Consumption stabilizes. Extreme poverty declines. People are less desperate. Automation accelerates without triggering immediate social collapse.

The harder problem is psychological.

For generations, work wasn’t just how people earned money; it was how they earned identity. Status. Purpose. Belonging. When work becomes optional, society has to answer a question it’s never seriously confronted before: what is a good life when productivity is no longer required?

Some people flourish. Others flounder.

A day in the life

Elena wakes up without an alarm. Her basic income covers rent, food, and healthcare. She spends the morning volunteering at a local elder-care center, then takes an online course in ceramics, something she always wanted to explore but never had time for.

Her brother, meanwhile, feels untethered. He hasn’t lost money, but he’s lost direction. He scrolls job boards anyway, even though he doesn’t strictly need a job. What he wants is structure. Recognition. A reason to feel useful.

This future solves the problem of survival, but it forces society to confront the harder question of meaning.

Scenario 4 – Make-Work, but On Purpose (Government as Employer of Last Resort)

This is the “we keep people working on purpose” path.

In this future, society quietly admits something it’s been avoiding: the market alone is no longer capable of providing meaningful work for everyone who wants it. AI didn’t cause a sudden collapse; it caused a slow hollowing. Jobs didn’t disappear all at once; they simply stopped forming at the bottom.

Governments respond not by banning AI or mandating employment quotas in private companies, but by becoming the employer of last resort.

This isn’t the old image of useless bureaucracy. It’s framed as national resilience. People are hired into roles that are socially valuable but economically fragile: climate adaptation projects, elder care support, local infrastructure maintenance, education assistants, public data stewardship, community health outreach. AI is everywhere, but humans are kept “in the loop” by design.

Work still exists. Pay is modest. Prestige quietly changes shape.

The real shift isn’t economic; it’s psychological. Employment becomes less about competition and more about participation.

A day in the life

At 8:30 a.m., Maya logs into her municipal work portal. She used to be a marketing operations manager; now she’s a “Community Systems Coordinator.”

Her job today is to work with an AI planning tool that flags neighborhoods vulnerable to heat waves. She coordinates with local volunteers, checks sensor data, and schedules cooling-center staffing for the week. The AI does the forecasting. The city trusts her to decide what actually happens.

She clocks out at 4:30 p.m. The pay covers rent and groceries, not luxury. But the work feels undeniably real. When she walks past a cooling center later that evening and sees it full, she knows exactly why she was there that morning.

No one pretends this is the same as her old career. But no one pretends it’s fake, either.

The quiet tradeoff

This world stabilizes society but subtly redefines ambition.

Private-sector roles still exist, but they’re fewer, more elite, and harder to access. Public work becomes the default for millions. Innovation continues, but risk-taking shifts upward, away from individuals and toward institutions.

The question this scenario leaves hanging isn’t “Will people work?”

It’s “Who gets to opt out of this system, and who doesn’t?”

Scenario 5 – The Age of Abundance (Deflation as the Pressure Valve)

This is the “AI makes everything cheap enough that the job problem softens” path.

This future doesn’t solve the job problem directly; it dissolves it sideways.

AI becomes so good, so cheap, and so deeply embedded that the cost of many essentials collapses. Not everything, but enough. Housing construction accelerates. Energy prices fall. Food production becomes hyper-efficient. Basic services (education, logistics, healthcare diagnostics) are heavily subsidized by AI-driven productivity gains.

People still lose jobs. But losing income no longer carries the same existential threat.

Money stretches further. The safety net isn’t a monthly check; it’s the disappearance of scarcity in everyday life.

Work becomes optional for survival but necessary for status, meaning, and differentiation.

A day in the life

Jonah wakes up in a small but well-designed apartment. His rent is low; modular construction and automated building slashed costs years ago. Breakfast is cheap. Power is cheap. Transportation is nearly free.

He spends the morning helping an AI-assisted fabrication lab design furniture for a local community center. He isn’t paid much, but he doesn’t need much. In the afternoon, he takes an online course on urban ecology, mostly for interest, partly for credibility.

He works maybe 20 hours a week. Not because he’s lazy, but because there’s no financial reason to work more unless he wants to.

At night, he meets friends. The conversation isn’t about promotions anymore. It’s about projects. Experiments. Reputation. What you choose to do now that you don’t have to do anything.

The big caveat

Abundance reduces pressure but doesn’t eliminate hierarchy.

Some people own the systems that create abundance. Others simply live inside it. Scarcity shifts from material goods to attention, influence, and control.

This world feels calmer. Less desperate. But it introduces a new tension: when survival is guaranteed, meaning becomes the scarce resource, and not everyone finds it.

What Jobs Remain Human? (For Now)

After all five scenarios, one question keeps resurfacing in different forms:

If AI can think, write, design, and plan, what’s left for people to do?

What skills do we really need if AI can outsmart us in every way? This is where I would lean on what Jensen Huang envisions as the most valuable skill:

“The smartest people are the ones who can reason about what should be built.” Someone who can decide what matters, not someone who just knows how to code.

His definition of smart in the AI era is unmistakable:

Long term, my personal definition of smart is someone who sits at the intersection of being technically astute, but also having human empathy, and having the ability to infer the unspoken—the around-the-corners, the unknowables. People who are able to see around corners are truly smart, and their value is incredible.

To be able to preempt problems before they show up, just because you feel the vibe.

And that vibe comes from a combination of data, analysis, first principles, life experience, wisdom, sensing other people.

That vibe, that is what I think is smart. That is going to be the future definition of smart.

And that person might actually score horribly on the SAT.

What remains human are roles where judgment carries consequences.

Not simulated consequences. Not internal metrics. Real ones.

A surgeon deciding whether to proceed when the scan is ambiguous. A construction foreman halting a job because the ground doesn’t feel right. A senior leader choosing not to optimize because the system is already brittle. A therapist recognizing when silence matters more than insight. A regulator deciding when speed becomes danger.

In each case, the value isn’t intelligence. It’s accountability.

AI can recommend. It can simulate outcomes. It can even outperform humans statistically. But it cannot own a decision in a way society recognizes as legitimate, not yet and maybe not ever.

The second category that remains stubbornly human is work anchored in physical reality.

Robots will arrive. They will improve. But the real world remains messy, irregular, and full of edge cases that defy clean abstraction. Skilled trades (construction, electrical work, repair, logistics) persist not because they are simple, but because they are situated. They require perception, improvisation, and adaptation to environments that change daily.

This is one reason the construction job boom exists alongside white-collar layoffs. AI eliminates coordination work faster than it eliminates physical scarcity.

Finally, there is work rooted in trust and relationship, not performance.

Caregiving. Teaching. Negotiation. Leadership. Mediation. These roles don’t disappear when AI improves; they become more important as systems grow more opaque. When decisions are harder to explain, people demand someone who can stand behind them.

The irony is that many of these roles were undervalued precisely because they didn’t scale.

Now, scalability is no longer the advantage it once was.

The Uncomfortable Truth

Every future above is a different answer to the same broken equation:

Work → Income → Survival

AI weakens the necessity of work, but not the human need for income, dignity, and purpose.

Taken together, these roles point to a quieter but more durable truth.

As AI absorbs more execution, optimization, and coordination, human value shifts away from output and toward responsibility. What remains human is not raw intelligence, speed, or scale. It is judgment under uncertainty. It is accountability when something goes wrong. It is presence in the physical world. It is trust when systems become too complex to fully explain.

This does not mean humans become less useful. It means we stop being interchangeable.

In a world where machines can generate endless options, the scarce skill is deciding which ones should exist. In a world where systems can act autonomously, the real work is choosing when not to act. And in a world where productivity is no longer the bottleneck, meaning becomes something we actively design, not something we inherit from a job title.

That is not a grim future. It is a demanding one.

Humans still matter, not because we can outcompute machines, but because someone has to live with the consequences of what gets built. And that responsibility does not scale. It belongs, stubbornly and unavoidably, to people.

As Jensen Huang put it,

“The real scarcity is no longer intelligence. It’s judgment.”
