SaaS Is Dead?

The value of software is going down.

Not the utility of it — software still runs everything. But the economic value of building it, of owning it, of charging for access to it. That’s compressing in ways that should worry anyone running a SaaS company.

The big question: is SaaS dead?

I’ve been thinking about this for a while now, and the more I observe the landscape, the more I realize we’re witnessing something profound — not the death of software, but the death of software as a product.

For decades, we operated under a simple mental model: build something useful, charge people to access it. You pay monthly, you get the tool. The value exchange was clear.

But something has shifted. Software is starting to look more like a services-based business. Building software itself is no longer the hard part. Customers are surrounded by tools that look similar, behave similarly, and promise the same outcomes. What used to take a team of twenty engineers six months can now be prototyped in a weekend.

If anyone can build what you built, why should customers pay you?

In this world, charging for access no longer makes sense. What customers are actually paying for is how quickly and consistently value is delivered.

The product is no longer the thing. The relationship is the thing.

Where Does Value Live Now?

If software is easy to build, then the question is no longer: What features does your product have?

That question feels almost quaint now. I remember when feature lists mattered — when you could win a deal because you had that one integration the competitor didn’t. Those days are fading.

The question becomes something deeper: How can software companies provide real value? What is their moat? What does continuous value creation actually look like? And if you’re a new company — why would customers choose you and let you hold on to their data?

Data is intimate. When a customer gives you their data, they’re giving you trust. They’re betting you’ll still be here tomorrow, that you’ll protect what they’ve shared, that you’ll use it to make their lives better.

I’ve watched companies lose this trust in slow motion. Not through a single dramatic breach, but through a thousand small disappointments — features that never arrived, feedback that disappeared into a void, competitors who seemed to listen while the incumbent stopped trying. The customer didn’t leave because the software was bad. They left because the relationship felt one-directional.

This decision is no longer about static capabilities. It’s about trust, speed, and durability over time.

Traditional software moats — switching costs, network effects, data lock-in — are eroding. Cloud interoperability makes migrations easier. Open standards reduce data lock-in. Features can be replicated overnight. Defensibility has shifted from ownership to participation.

The new moats are different in character. They’re not about what you own — they’re about how deeply you participate in the customer’s world.

Workflow embedding creates dependency-driven defensibility. When your product is woven into a customer’s daily operations — integrated with their tools, shaping their processes, connected at every touchpoint — stickiness grows with every new integration, not just every new feature. The deeper you embed, the more painful extraction becomes — not because you’ve trapped the customer, but because you’ve made yourself genuinely useful at every layer.

Learning velocity compounds over time. Systems that improve with every interaction — where customer usage refines the product for everyone — build advantages that widen with scale. Data moats are no longer about hoarding information. They’re about how fast you learn from it and feed those learnings back into the product.

Trust and governance become competitive barriers as AI drives more sensitive decisions. Transparency, explainability, and auditability aren’t compliance checkboxes — they’re what separates the companies customers will bet their business on from the ones they won’t.

These moats reinforce each other. Deep workflow embedding generates rich data. Rich data accelerates learning. Demonstrated learning builds trust. Trust opens the door to deeper embedding. It’s a flywheel — and the company that spins it fastest wins.

The Real Moat: Time From Request to Delivery

Here’s what I’ve come to understand: the real value is the time it takes from when a customer requests a feature to when that feature is delivered to them.

In a crowded market where everyone can build similar software, the company that wins is the one that can repeatedly minimize the time between customer intent, product decision, and shipped functionality.

This is not about being fast once. Anyone can sprint. The moat is in being fast consistently — sustaining velocity without burning out, without cutting corners, without accumulating the kind of technical debt that eventually brings everything to a halt.

What separates the best teams isn’t talent or tooling alone. It’s something structural — the way information flows, how decisions get made, how few handoffs exist between a customer’s request and a developer’s hands. They’ve removed the friction most organizations treat as inevitable.

Value is created when this loop keeps getting shorter. The loop itself becomes the product.
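If the loop is the product, it helps to measure it. Here is a minimal sketch of how a team might track request-to-delivery time, assuming each shipped feature carries a request timestamp and a ship timestamp. The function names and the sample dates are hypothetical, invented only for illustration.

```python
from datetime import datetime
from statistics import median


def lead_time_days(requested_at: datetime, shipped_at: datetime) -> float:
    """Days between a customer's request and the shipped feature."""
    return (shipped_at - requested_at).total_seconds() / 86400


def median_lead_time(features: list[tuple[datetime, datetime]]) -> float:
    """Median request-to-delivery time across shipped features."""
    return median(lead_time_days(req, ship) for req, ship in features)


# Hypothetical data: (requested_at, shipped_at) for each feature.
shipped = [
    (datetime(2025, 1, 6), datetime(2025, 1, 8)),    # 2 days
    (datetime(2025, 1, 10), datetime(2025, 1, 14)),  # 4 days
    (datetime(2025, 2, 3), datetime(2025, 2, 12)),   # 9 days
]
print(median_lead_time(shipped))
```

The median is used here rather than the mean so that one slow outlier doesn’t hide an otherwise fast loop. What matters is whether the number keeps shrinking from one period to the next.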

The Organization That Enables Speed

So here’s how I envision an organization moving at unprecedented pace while still producing best-in-class products.

The organizations that succeed share a common trait: they’ve stripped away the layers between customer need and technical execution. The ones that fail keep adding process, adding approvals, adding meetings — trying to coordinate their way to speed, which is a contradiction in terms.

There are really only two roles that act as core drivers.

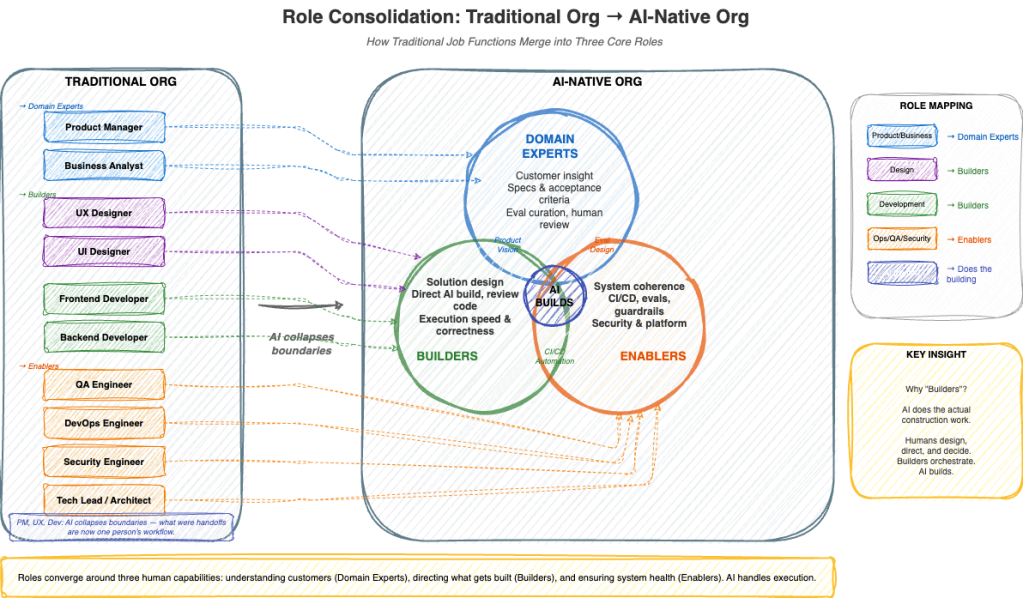

Builders are technical experts who take a well-defined product definition and build solutions using industry best practices. I’ve come to see builders not as code writers, but as translators. They take the messy, ambiguous language of human need and translate it into the precise language of systems. A great builder doesn’t just execute — they push back, ask clarifying questions, and sometimes propose solutions the customer never imagined.

Domain Experts understand customers deeply. They anticipate needs, understand where the market is heading, and articulate exactly what the customer wants in writing. They have to hold two contradictory things in their head simultaneously: deep empathy for the customer’s current pain, and clear vision of where the market is going. I’ve seen brilliant domain experts deprioritize a feature every customer was asking for because they saw a larger shift coming. Six months later, the market moved exactly where they predicted.

The entire process centers around enabling these two roles to work together — creating a continuous pipeline of requirements and delivery.

Enablers: The Third Role

There’s a third category: Enablers. Their job is ensuring the system scales, remains coherent, and continues to move fast without breaking.

I think of enablers as the people who tend the garden. Builders plant seeds. Domain experts decide what to grow. Enablers make sure the soil is healthy, the irrigation works, and the pests don’t take over.

The best enablers are nearly invisible when things go well. You notice them most by their absence — when CI pipelines break and nobody knows how to fix them, when a deployment fails at 2 AM and there’s no runbook. They’re not middle managers. They’re system stewards.

The AI-Powered Information Backbone

What connects these roles is not meetings and status updates — it’s an AI-enabled information infrastructure that ensures nothing gets lost in translation.

Every brainstorming session, every customer conversation, every design discussion is recorded and processed by AI note-taking tools that automatically capture key decisions, action items, and requirements. These notes are structured and circulated to the relevant builders without anyone having to manually write a summary or schedule a follow-up meeting to relay what was said.

Information sharing is unobstructed. There is no need to repeat the same discussion in different settings or for different groups of people. When a domain expert captures a customer requirement, it flows directly into the issue tracking system: structured, prioritized, and contextualized. Builders never receive ambiguous requirements born from gaps in human communication. They receive clear, traceable artifacts that link back to the original customer conversation.

This isn’t about adding more tools. It’s about removing the friction that exists between human intent and human understanding. Most software projects fail not because the code is wrong, but because the requirements were misunderstood — because something was said in a meeting that didn’t make it into a ticket, or a nuance was lost when one person summarized another person’s words. AI eliminates these gaps by preserving the full context and routing it to exactly the people who need it.

The result is an organization where every thought about what needs to be built is streamlined and routed through proper tracking mechanisms. No ambiguity. No telephone game. No builder left wondering what the customer actually meant.

Job Functions Are Collapsing

Traditionally, you had clearly defined roles: a product manager, a UX designer, a developer. Three separate job functions. Three separate identities.

Now those three jobs are increasingly in competition to do the same work.

I saw this firsthand recently. A product manager used an AI coding tool to build a working prototype — complete with UI — in an afternoon. Previously, that would have required a design sprint, a developer, and at least two weeks. The PM did it between meetings.

AI and automation are collapsing the boundaries. What used to be handoffs are now internal loops inside a single person’s workflow. The value is no longer in owning a narrow slice. The value is in translating intent into execution, moving fluidly across design, product, and implementation.

As roles merge, the organization becomes flatter, faster, more execution-driven. We spent decades specializing, fragmenting, creating silos. Now we’re integrating, generalizing, breaking down walls. The pendulum swings.

How traditional job functions merge into three core roles — Domain Experts, Builders, and Enablers — with AI at the center doing the actual building.

How a Feature Actually Gets Built

Let me walk through what this looks like in practice.

A domain expert works closely with a customer, gathering requirements through conversations and deep understanding of pain points. Every interaction — whether a brainstorming session, a discovery call, or an informal discussion — is recorded and processed by AI note-taking tools that automatically capture decisions, requirements, and context. These notes are structured and circulated to the builders without anyone manually transcribing or summarizing. The domain expert’s job is to ensure the intent is captured faithfully, not to serve as a human relay.

This translation step is where most projects go wrong. The customer says “I need a dashboard.” What they mean is “I need to feel in control of something that currently feels chaotic.” The domain expert’s job is to hear both — the surface request and the deeper need. AI tools preserve the full nuance of these conversations so nothing is lost when the requirement reaches the builder.

The requirement flows through the issue tracking system automatically — structured, prioritized, and linked to the original conversation. Builders receive a clear artifact, not a secondhand summary. They can trace any requirement back to the customer’s own words.
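To make “a clear artifact, not a secondhand summary” concrete, here is one way such a requirement could be modeled. This is a sketch under my own assumptions: the class names, the fields, and the priority scale are hypothetical and not taken from any particular issue tracker.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SourceQuote:
    conversation_id: str  # hypothetical ID of the recorded conversation
    speaker: str
    text: str             # the customer's own words


@dataclass
class Requirement:
    title: str
    priority: int          # assumption: 1 is the highest priority
    surface_request: str   # what the customer literally asked for
    underlying_need: str   # what the domain expert heard underneath
    sources: list[SourceQuote] = field(default_factory=list)

    def is_traceable(self) -> bool:
        """Traceable means at least one quote links back to a conversation."""
        return len(self.sources) > 0


def ready_for_builders(requirements: list[Requirement]) -> list[Requirement]:
    """Keep only traceable requirements, highest priority first."""
    traceable = (r for r in requirements if r.is_traceable())
    return sorted(traceable, key=lambda r: r.priority)
```

The design choice worth noting is that a requirement with no source quote never reaches a builder. Traceability is enforced by the structure, not by good intentions.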

Builders design a solution that best fits the use case. Good builders ask hard questions. “Why do we need this?” “Is there a simpler way?” These questions feel like resistance, but they’re actually the builders caring enough to make sure what gets built is worth building.

Builders articulate their design rationale — why this approach over alternatives, what tradeoffs were accepted, what constraints shaped the solution — and share it for review. This isn’t busywork documentation that nobody reads. It’s living design documentation that enables meaningful feedback and preserves institutional knowledge. Every design decision can be traced back to a deliberate choice, and builders are expected to defend those choices when challenged.

Enablers, technology experts with a vision for how the system needs to evolve, review and audit the system design at this stage. They’re not just checking for correctness. They’re ensuring the design aligns with the future architecture, that short-term decisions don’t create long-term constraints, and that builders are working toward a coherent technical vision. They stay plugged in throughout the process, providing direction and course-correcting before costly mistakes are baked into code.

Writing things down forces clarity — the document isn’t just communication, it’s thinking. Some of the best technical decisions I’ve seen came from writing a two-page design doc and realizing halfway through that the approach was wrong.

Notice what hasn’t happened yet: no code. The most expensive part of building software isn’t writing code — it’s writing the wrong code.

Building Is No Longer the Bottleneck

This is the sentence that felt almost heretical: building is no longer the hard part.

For my entire career, building was the bottleneck. We hired more engineers. We optimized our processes. We debated architectural patterns.

And now? I’ve watched a junior developer use an agentic coding tool to scaffold an entire microservice — models, routes, tests, deployment config — in under two hours. It would have taken a week the old way.

The tools have changed, but discipline hasn’t become optional. If anything, discipline matters more now. Builders use agentic coding tools at every step of the process — from scaffolding architectures to generating implementations to writing tests — but they continuously stay involved and audit every output. They don’t hand control to the AI and walk away. They pair with it, challenge it, and override it when it’s wrong.

The critical distinction: builders ultimately own the output. Every line of code, every architectural choice, every design tradeoff — a builder can explain why it exists and defend the decision. When you can generate code quickly, the risk isn’t that you build too little — it’s that you build too much, or that nobody understands what was built. Ownership means a builder has read, reviewed, and taken responsibility for every piece of AI-generated code in their domain.

Tests are not just quality assurance anymore. They’re specification. In a world where AI writes code, tests are how humans maintain control.
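Here is a small sketch of what “tests as specification” can look like. The function `normalize_email` and its rules are hypothetical, chosen only to illustrate the idea: a human writes the assertions, and any implementation, AI-generated or not, has to satisfy them.

```python
def normalize_email(raw: str) -> str:
    """Candidate implementation; imagine an AI tool proposed it."""
    return raw.strip().lower()


def check_spec(fn) -> None:
    """Human-written specification, expressed as assertions."""
    # Surrounding whitespace is removed and case is folded.
    assert fn("  Ada@Example.COM ") == "ada@example.com"
    # Already-clean input comes back unchanged.
    assert fn("bob@example.com") == "bob@example.com"
    # Applying the function twice changes nothing further.
    once = fn(" Eve@Example.com")
    assert fn(once) == once


check_spec(normalize_email)  # raises AssertionError if the candidate drifts
```

The implementation can be regenerated as often as you like. The specification is the part a human wrote and owns.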

Domain Experts Close the Loop

Once something is built, domain experts plug back in to confirm that what was delivered matches what was envisioned.

This step sounds routine, but it’s where the most subtle problems surface. A feature can pass every test and still miss the mark. The data might be technically correct but presented in a way that confuses users. These are the things only a domain expert catches — the gap between “it works” and “it’s right.”

In agentic software, the same input can produce different outputs depending on model versions and prompt interactions. Domain experts are the last line of defense against drift — the slow, invisible degradation of quality that automated tests alone can’t catch.

The Agentic CI/CD Problem

Traditional CI/CD pipelines assume deterministic code. You write a function, it does the same thing every time. The entire infrastructure of modern software delivery is built on this assumption.

Agentic systems break it completely.

Every LLM call introduces variance. A prompt that works perfectly today might produce subtly different results after a model update. A chain of agent calls that handles 95% of cases might hallucinate on the other 5% in ways you can’t predict from test cases alone.

This requires a fundamentally different pipeline architecture — one where human judgment is not optional, but built into the system itself.

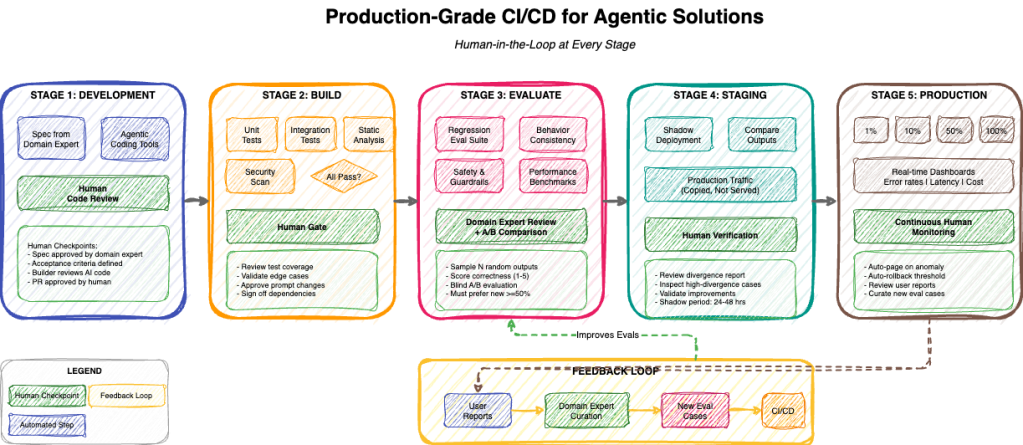

The complete pipeline with human checkpoints at every stage — from Development through Production, with a continuous feedback loop that improves evaluations over time.

Development is human-guided agent coding. Every line of AI-generated code gets reviewed by a human. This isn’t about distrust of AI — it’s about accountability.

Build runs automated verification with human gates. Prompt changes are treated like code changes — versioned and reviewed. In agentic software, changing three words in a system prompt can alter the behavior of an entire product.
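One way to treat prompts like code is to derive a version from the prompt text itself and refuse to run any version a human hasn’t approved. The sketch below assumes an in-memory registry. `PromptRegistry` and its methods are hypothetical names, and a real setup would more likely live in version control with review tooling around it.

```python
import hashlib


def prompt_version(text: str) -> str:
    """Content-derived version: any edit to the prompt yields a new ID."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


class PromptRegistry:
    """Hypothetical in-memory record of which prompt versions were reviewed."""

    def __init__(self) -> None:
        self._approved: dict[str, str] = {}  # version -> reviewer

    def approve(self, text: str, reviewer: str) -> str:
        version = prompt_version(text)
        self._approved[version] = reviewer
        return version

    def require_approved(self, text: str) -> str:
        """Return the version if reviewed; otherwise block the build."""
        version = prompt_version(text)
        if version not in self._approved:
            raise PermissionError(f"prompt version {version} has not been reviewed")
        return version
```

Because the version is a hash of the content, changing three words produces an unapproved version, and the gate fails until someone looks at it.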

Evaluation is where agentic CI/CD diverges completely from traditional pipelines. Domain experts catch “technically correct but wrong” outputs — the kind where the AI gives a perfectly logical answer that misses what the user was really asking.

Staging runs new versions in shadow mode — processing the same inputs as production but not serving outputs. You need to see how the system handles real traffic, real edge cases, real user behaviors.
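A rough sketch of shadow mode follows, assuming both versions can be called as plain functions. The names are hypothetical, and the exact-match comparison is a deliberate simplification: for LLM outputs you would likely want a looser, domain-specific notion of divergence.

```python
def handle_request(user_input, production, candidate, divergence_log):
    """Serve the production output; run the candidate only for comparison."""
    served = production(user_input)
    try:
        shadow = candidate(user_input)
    except Exception as exc:
        # A crashing candidate must never affect what the user sees.
        divergence_log.append(
            {"input": user_input, "served": served, "shadow_error": repr(exc)}
        )
        return served
    if shadow != served:
        # Simplification: exact equality stands in for a real comparison.
        divergence_log.append(
            {"input": user_input, "served": served, "shadow": shadow}
        )
    return served
```

The user only ever receives `served`. The divergence log is what humans review before approving a deployment.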

Production uses canary rollouts with continuous human monitoring. Every production issue becomes a test case that prevents the same problem from happening again. The system learns from its mistakes, but only because humans are curating those lessons.
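For the canary part, a common approach is to assign each user to a stable bucket so the same person always sees the same version. This is a minimal sketch of that idea and not a description of any specific rollout tool. The function name and the 100-bucket scheme are my assumptions.

```python
import hashlib


def in_canary(user_id: str, percent: int) -> bool:
    """Deterministically place a user in the canary for a given rollout percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket, 0..99
    return bucket < percent
```

Because the bucket depends only on the user ID, raising the percentage from 10 to 50 keeps every existing canary user in the canary and simply adds more. That keeps the human monitoring the rollout looking at a consistent population.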

Why Every Stage Needs Human Input

Traditional software is like a train on tracks — it goes exactly where the tracks lead, every time. Agentic software is more like a car with GPS navigation. It usually gets you where you’re going, but it might take a different route each time, occasionally suggest a turn that makes no sense, and sometimes confidently drive you to the wrong address entirely.

Without human checkpoints, small errors compound into major failures. With human checkpoints, the system improves with every deployment.

The goal isn’t to slow down the pipeline. Speed comes from trust, not from skipping steps. Paradoxically, the teams I’ve seen move fastest are the ones with the most rigorous human review processes.

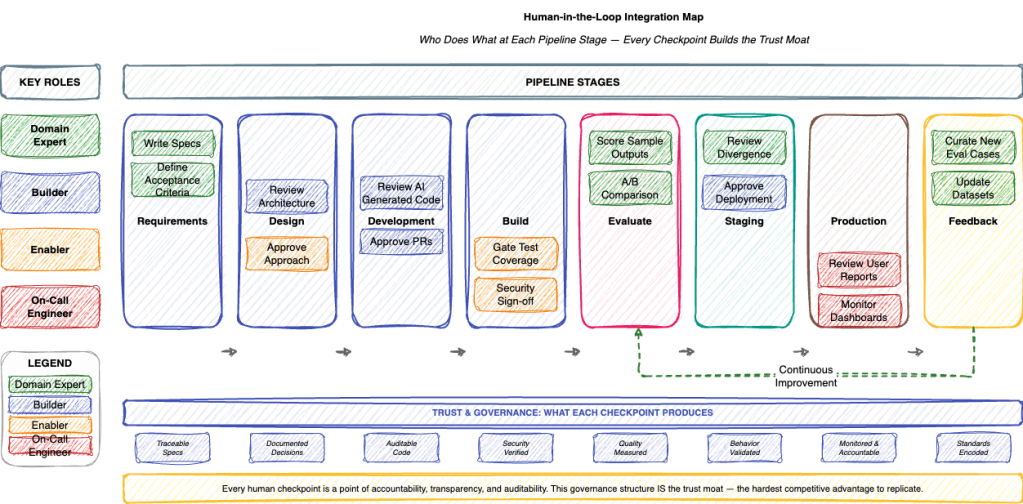

Each role — Domain Expert, Builder, Enabler, and On-Call Engineer — has specific touchpoints across the pipeline, ensuring human judgment is embedded at every critical decision point.

| Stage | Human Role | Who |
|---|---|---|
| Requirements | Write specs, define acceptance criteria | Domain Expert |
| Design | Review architecture, approve approach | Builder + Enabler |
| Development | Review AI-generated code, approve PRs | Builder |
| Build | Gate on test coverage, security sign-off | Enabler |
| Evaluate | Score sample outputs, A/B comparison | Domain Expert |
| Staging | Review divergence, approve deployment | Domain Expert + Builder |
| Production | Monitor dashboards, review user reports | On-call Engineer |
| Feedback | Curate new eval cases, update datasets | Domain Expert |
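
The table above can be read as a set of gates. Here is a sketch of how it might be encoded so that a stage cannot advance without the right people signing off. The role assignments come straight from the table, while the identifiers and the gate functions are hypothetical.

```python
# Stage -> roles that must sign off, taken from the table above.
REQUIRED_SIGNOFFS = {
    "requirements": {"domain_expert"},
    "design": {"builder", "enabler"},
    "development": {"builder"},
    "build": {"enabler"},
    "evaluate": {"domain_expert"},
    "staging": {"domain_expert", "builder"},
    "production": {"on_call_engineer"},
    "feedback": {"domain_expert"},
}


def missing_signoffs(stage: str, signoffs: list[str]) -> set[str]:
    """Roles that still need to approve before the stage can advance."""
    return REQUIRED_SIGNOFFS[stage] - set(signoffs)


def can_advance(stage: str, signoffs: list[str]) -> bool:
    """A stage advances only when every required role has signed off."""
    return not missing_signoffs(stage, signoffs)
```

For example, `missing_signoffs("staging", ["builder"])` reports that the domain expert still has to review before deployment is approved.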

Speed Through Automation, Quality Through Expertise

The sophistication of this pipeline serves two goals simultaneously: maximizing quality and minimizing the time from concept to customer.

When the pipeline works well, prototypes emerge fast enough that customers can see what they’re getting early — before significant investment locks the team into a direction. Early feedback is cheaper feedback. A prototype that takes a week instead of a quarter means the customer can course-correct before the cost of change becomes prohibitive. This is where the moat lives: not in building faster, but in learning faster.

Time to production shrinks because automation handles the repetitive work at every stage — running evals, managing deployments, monitoring for regressions — while domain experts are plugged in at the right moments to validate outputs early. You don’t wait until the end to discover the product missed the mark. Experts are validating continuously, at every stage where their judgment matters.

Teams are equipped with tools to visualize and improve prompts, inspect model inputs and outputs, and build feedback loops that continuously refine the product. Without this observability, agentic systems degrade silently — prompts drift, model updates introduce subtle behavioral changes, and quality erodes in ways that automated tests alone can’t catch. The tools make the invisible visible, giving teams the ability to see exactly where the system is underperforming and why.

These feedback loops are not optional. They’re the mechanism by which product performance improves over time rather than degrading. Every production output feeds back into the evaluation dataset. Every customer interaction sharpens the prompts. Every edge case caught becomes a test case that prevents regression. The system doesn’t just maintain quality — it compounds it.
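As a sketch of “every edge case caught becomes a test case,” here is one way to fold production findings into an eval dataset while keeping a human in charge of what gets in. The `EvalCase` fields and the `curate` function are hypothetical, and the dataset is just a list for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    user_input: str
    expected_behavior: str  # written by the domain expert, not inferred
    source: str             # e.g. "production-incident" (label is an assumption)


def curate(dataset: list[EvalCase], candidate: EvalCase, approved_by_expert: bool) -> bool:
    """Add a case only if an expert approved it and the input is not already covered."""
    if not approved_by_expert:
        return False
    if any(case.user_input == candidate.user_input for case in dataset):
        return False
    dataset.append(candidate)
    return True
```

The approval flag is the point. The dataset grows with every incident, but only through cases a domain expert has actually looked at.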

Organizations that move fastest also invest in giving their teams access to the best available AI models and tools to experiment with and build on. Builders and domain experts receive guidance on best practices — not rigid mandates, but informed recommendations on what works, what doesn’t, and what’s emerging. The goal is an environment where trying a new approach is easier than defending the status quo, where experimentation is the default and the best ideas propagate quickly across teams.

Building Quality: Critique Shadowing and the LLM Judge

Most teams building agentic systems want to automate quality assessment as quickly as possible. They build an LLM judge, point it at their outputs, and declare victory. But the judge is only as good as the standards it encodes, and those standards come from humans sitting down, looking at actual outputs, and making hard calls.

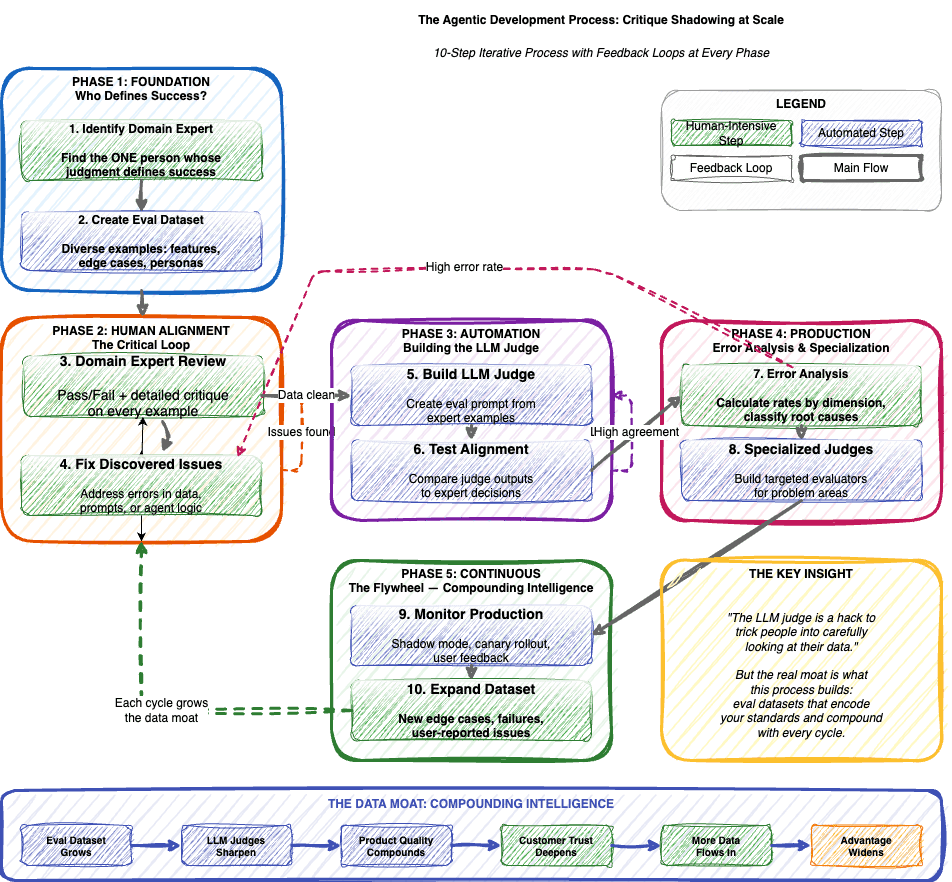

A 10-step iterative process with feedback loops at every phase. Green boxes indicate human-intensive steps. The arrows looping back represent continuous refinement.

It starts with identifying one domain expert — a single individual whose judgment is crucial. Not a committee. Committees diffuse responsibility. A single expert creates a clear standard everyone can align to.

Then you build an eval dataset representative of production traffic, not just happy paths. Most teams dramatically underestimate how weird real users are. The inputs you think are edge cases turn out to be Tuesday afternoon for your power users.

The domain expert reviews every example with binary pass/fail decisions. No ambiguous 3/5 scores. Detailed critiques explaining why something fails. I’ve watched domain experts go through this process and discover they didn’t agree with their own initial standards once they saw enough examples. The act of reviewing forces clarity.

Every failure reveals something: data issues, prompt issues, agent logic issues. You fix them and return to review. The loop continues until behavior is acceptable.

Once you have human-validated examples, you build an evaluation prompt using them, then test alignment — aim for over 90% agreement with the expert’s decisions. Build specialized judges for problem areas. Production reveals what synthetic data cannot. The loop never ends — it becomes a flywheel.
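Testing alignment comes down to a simple comparison: run the judge over the expert-labeled examples and count how often the two agree. Below is a minimal sketch of that check. The `judge` argument stands in for whatever calls your LLM judge, and the names here are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class LabeledExample:
    output: str        # the system output that was reviewed
    expert_pass: bool  # the expert's binary pass/fail decision
    critique: str      # why the expert decided that way


def agreement_rate(examples: list[LabeledExample], judge: Callable[[str], bool]) -> float:
    """Fraction of examples where the judge matches the expert's decision."""
    if not examples:
        raise ValueError("need at least one labeled example")
    matches = sum(1 for ex in examples if judge(ex.output) == ex.expert_pass)
    return matches / len(examples)


def disagreements(examples: list[LabeledExample], judge: Callable[[str], bool]) -> list[LabeledExample]:
    """Examples to read closely before changing the evaluation prompt."""
    return [ex for ex in examples if judge(ex.output) != ex.expert_pass]
```

The agreement rate is the number to compare against the 90% target. The list of disagreements, with the expert’s critiques attached, is what tells you how to fix the judge.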

The LLM judge is a hack to trick people into carefully looking at their data. But it’s a brilliant hack. Without this process, “quality” is a feeling. With it, quality is a number you can track, debate, and improve.

Where This Is Heading

The pipeline I’ve described is designed for today — where human judgment is essential at every stage. But this isn’t the end state.

The question isn’t if automation will expand, but which functions get automated first, and what remains irreducibly human.

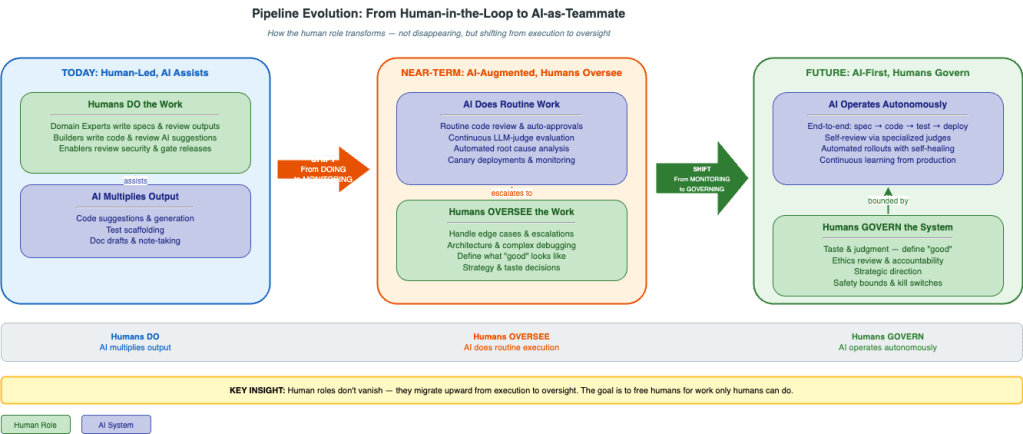

The evolution from human-led to AI-first. Green boxes are human roles, purple boxes are AI systems, orange boxes are governance. Notice how the green boxes don’t vanish; they migrate upward from execution to oversight.

I think about this in three horizons, and what strikes me most is how the human role transforms at each stage: not disappearing, but fundamentally changing in character.

Today: Human-Led, AI Assists

This is where most teams are now. Domain experts write specs and review outputs. Builders write code and review AI suggestions. Enablers review security and gate releases. AI tools are productivity multipliers, but humans make every meaningful decision.

This is a reasonable place to be. The tooling is powerful but not yet trustworthy enough to operate unsupervised. What’s important at this stage is building the muscle — the eval datasets, the review habits, the feedback loops — that will make later stages possible. Teams that skip this foundation and jump straight to automation end up with systems they don’t understand and can’t debug.

Near-Term: AI-Augmented, Humans Handle Exceptions

As AI agents become more capable, routine work shifts to automation. AI agents review standard PRs, flag issues, and auto-approve low-risk changes. LLM judges run continuously, escalating only edge cases. AI performs root cause analysis, and humans engage only for novel failures.

This is where things start to feel genuinely different. The volume of work an AI-augmented team can handle is an order of magnitude larger. But the human role changes — from doing the work to monitoring the work, from being in the loop to being on the loop.

I think this transition will be psychologically harder than most people expect. There’s a deep satisfaction in doing the work yourself, and it takes a different kind of skill to supervise well. Not everyone who was excellent at doing will be excellent at overseeing. The domain expert’s value shifts from their ability to do the work to their ability to define what good work looks like and recognize it when they see it. Builders spend less time writing code and more time designing the scaffolding that AI fills in — writing the tests, defining the interfaces, establishing the patterns.

Future: AI-First, Humans Provide Oversight

Eventually — and I think this is coming faster than most people expect — we get to AI-first with humans providing oversight. The AI team operates autonomously within human-defined bounds. End-to-end feature development. Self-review using specialized judges. Automated rollouts with self-healing.

This sounds like science fiction, but pieces of it are already working. I’ve seen teams where AI handles the entire cycle from spec to PR, with a human spending ten minutes reviewing what used to take days.

Even in this world, some things remain irreducibly human.

Taste and judgment — defining what “good” means. You can train an AI to evaluate against criteria, but someone has to define the criteria.

Ethics review — making sure you’re optimizing for the right metric. I’ve seen systems that were technically brilliant but ethically questionable, and no automated test would have caught it because the problem wasn’t in the code — it was in the intent.

Strategic direction — the hardest question isn’t “how do we build this?” but “should we build this at all?”

Accountability — when systems fail, there needs to be a human who can explain what happened and make sure it doesn’t happen again. You can’t assign accountability to an algorithm.

The goal isn’t to eliminate humans. The goal is to free humans for the work that only humans can do.

The Automation Progression

Not all functions automate at the same rate.

Now: code generation, unit tests, CI/CD pipelines, anomaly detection. Well-defined, repetitive tasks. Machines are already better at them than most humans.

Near-term: routine code review, integration tests, canary analysis, root cause analysis. AI is getting good enough that human review of routine work becomes a rubber stamp, which means it’s ready to be automated.

Future: requirements gathering, edge case identification, eval curation, incident response. These feel deeply human today, but they’re more pattern-based than we’d like to admit.

Stays human: architecture decisions, ethics review, strategic direction, novel problem solving. They require reasoning about things that don’t exist yet, making tradeoffs between values that can’t be quantified.

The pattern is clear. Functions that are well-defined and repetitive automate first. Functions requiring taste, judgment, and creativity remain human longest.

What This Means for Organizations

Having engineers isn’t the moat — everyone has access to AI coding tools. The moat lives in three places.

First, workflow embedding — how deeply your development process is woven into the customer’s operations. When your feedback loops, your deployment pipeline, and your domain expertise are integrated into the customer’s world, switching costs aren’t about data migration. They’re about losing a partner who understands the customer’s business at every level — a partner whose AI-powered information infrastructure has captured every conversation, every requirement, every nuance of what the customer needs.

Second, learning velocity — how fast you learn from production. The eval datasets that encode your standards, the feedback loops that refine your models, the institutional knowledge captured in every design document and review cycle. These compound. Every deployment makes the next one better. Every customer interaction sharpens the product for all customers. The organization that learns fastest builds an advantage that widens with every iteration.

Third, trust — earned through transparency, accountability, and consistent delivery. When customers can see how decisions are made, when they know a human reviewed every critical output, when they experience a team that delivers what it promises on a timeline that respects their urgency — that trust becomes the hardest moat to replicate. Trust isn’t a feature. It’s the cumulative result of a thousand small acts of competence and care.

Efficiency gains compound. AI agents work 24/7 without fatigue. Feedback loops shrink from days to minutes. The cost of iteration approaches zero. The bottleneck becomes human taste and strategic clarity — which, if you think about it, is exactly where the bottleneck should be.

The companies that thrive won’t be the ones with the most engineers. They’ll be the ones with the clearest vision of what they’re building, the most disciplined systems for evaluating whether they’ve built it well, and the deepest integration into the customers they serve.

So Is SaaS Dead?

If SaaS is dead, what replaces it isn’t “no software.”

What replaces it is software that earns its value continuously.

The winners will be companies that minimize time from request to delivery. That embed themselves so deeply in the customer’s workflow that the relationship becomes the product. That use AI to maximize efficiency at every step — from capturing requirements to shipping code — while humans stay in control of every decision that matters. That build learning systems which compound value over time instead of selling access to static features.

The companies that figure this out will have a meaningful advantage. The ones that don’t will wonder where their moat went.
