“I would rather have questions that can’t be answered than answers that can’t be questioned.” — Richard Feynman

I was drinking coffee on Sunday morning when the anchor said it plainly: hundreds of brand new, AI-guided kamikaze drones deployed, in active combat, right now.

That line landed differently than I expected. Not because it shocked me — I’ve spent enough time building and working inside AI systems to know they don’t stay in productivity tools for long. But because it crystallized something I’d been circling for weeks. AI didn’t enter modern warfare this year. It upgraded it. Same conflicts, same geopolitical fault lines, same ancient human instinct to destroy what you can’t control — but the software layer running underneath all of it has been completely replaced.

That’s the thing about an OS upgrade. The hardware doesn’t change. The applications look the same on the surface. But everything executes differently — faster, at a different scale, with different failure modes that no one fully mapped before deployment. That’s the shift I want to understand. Not whether it’s good or bad. Just: what exactly changed, how fast, and where is the trajectory pointing from here?

From Analysts to Algorithms: The Intelligence Layer Gets Rebuilt

Start with how AI actually enters the battlefield — not in the dramatic Terminator sense, but in the quiet, infrastructural way that really changes things.

For decades, military intelligence was fundamentally a human scaling problem. Thousands of analysts sifting through intercepted calls, satellite imagery, surveillance feeds — looking for signal in a vast, noisy dataset. The bottleneck was always human throughput. You could only process as much information as your analysts had hours in the day.

The shift started before this conflict. Israeli intelligence reportedly used AI to parse traffic-camera feeds and intercepted communications across Iran at volumes no human division could work through in time. As the New York Times’ Hard Fork podcast framed it in March 2026, that is what AI does in conflict today: “risk reduction at scale.” You’re not replacing the analyst. You’re multiplying what any analyst can see — and compressing the time from raw data to actionable intelligence from days to seconds.

In Operation Epic Fury — the US-led strikes against Iran beginning in early March 2026 — that compression became visible at scale. AI tools helped American forces identify and hit more than 5,500 targets inside Iran, according to Admiral Brad Cooper, head of US Central Command (DefenseScoop, March 2026). The backbone is Palantir’s Maven Smart System, now operating alongside Anthropic’s Claude, which has been available to US defence and intelligence customers through Palantir since late 2024 and is covered by a $200M DoD contract awarded in 2025. On day one of operations alone, Claude generated approximately 1,000 prioritised targets — synthesising satellite imagery, signals intelligence, and surveillance feeds in real time, outputting GPS coordinates, weapons recommendations, and automated legal justifications for each strike (CBS News, March 2026).

“Maven uses AI algorithms to identify potential targets from satellite and other intelligence data, and Claude helps military planners sort the information and decide on targets and priorities.” — CBS News, March 2026

Think of it like a Bloomberg terminal for targeting. Data flows in from every sensor layer simultaneously. The model identifies patterns, flags anomalies, cross-references against known military installations, and surfaces recommendations faster than any human team could. A human then reviews the output and makes the call.

The interesting design question here isn’t whether the AI is accurate. It’s what happens to human decision-making when the machine produces a thousand recommendations before lunch. Independent testing has already flagged a 12.3% AI misidentification rate in complex urban scenarios — including a case where AI image recognition “mistakenly identified a reflective puddle as a missile launcher during testing” (Japan Times, March 9, 2026). Researchers have a name for what happens when humans review AI output at volume and velocity: automation bias — operators tend to rubber-stamp recommendations rather than genuinely interrogating each one. The speed of the system creates institutional pressure to approve and move forward. The AI doesn’t take over the decision. It reshapes the conditions under which humans decide.
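To put that 12.3% figure next to the day-one volume, here is a back-of-the-envelope sketch in Python. It assumes, purely for illustration, that the urban test rate would carry over unchanged to roughly 1,000 recommendations and that each error is independent of the others; neither assumption comes from the reporting, and the function names are my own.

```python
import math

def expected_misidentifications(error_rate: float, volume: int) -> float:
    """Mean number of wrong recommendations: error_rate * volume."""
    return error_rate * volume

def binomial_spread(error_rate: float, volume: int) -> float:
    """Standard deviation of the error count, treating each recommendation
    as an independent trial (a binomial model, which is an assumption)."""
    return math.sqrt(volume * error_rate * (1.0 - error_rate))

# Illustrative inputs only: the 12.3% urban test rate applied to ~1,000 day-one targets.
ERROR_RATE = 0.123
DAY_ONE_TARGETS = 1_000

mean = expected_misidentifications(ERROR_RATE, DAY_ONE_TARGETS)
spread = binomial_spread(ERROR_RATE, DAY_ONE_TARGETS)
print(f"expected misidentifications: {mean:.0f} (+/- {spread:.0f})")
```

Under those assumptions you’d expect on the order of 120 bad recommendations in a single day’s queue, give or take about ten. The precise number isn’t the point. Each one of them lands in front of a reviewer who is already working against the clock.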

Senator Mark Warner, top Democrat on the Senate Intelligence Committee, said in March 2026 he had “unanswered questions about how the technology is being used” — and noted there is no existing congressional oversight framework for AI-assisted targeting. The military deployed the system. The lawmakers found out from the news.

The New Economics of Destruction: Kamikaze Drones and Swarm Warfare

The intelligence layer is one piece. The weapons layer is where the technical picture gets genuinely fascinating.

That Sunday-morning broadcast spelled it out: the conflict with Iran is running on a new generation of AI-guided kamikaze drones — one-way attack vehicles that don’t return. Dr. Rebecca Grant, VP of the Lexington Institute, described the cost structure on the March 15 Fox News Podcast: “You’d want to compare them more to the costing of artillery rounds more than you would to fighters.” Not multi-million-dollar aircraft. Expendable units, priced and deployed more like ammunition. The economic asymmetry is significant: you’re doing part of a fighter jet’s job at a unit cost closer to an artillery shell’s.

That shift changes the logic of warfare in structural ways. One-way drones can be configured for two different effects: kinetic blast, or electromagnetic jamming. The same swarm can destroy physical infrastructure or disable electronics — and you choose the payload based on your target. As Grant noted: “It enables militaries to do things they really couldn’t do before, and that they’re really only beginning to explore.”

The democratization effect is already visible. Iran has been supplying Russia’s Shahed drone program, providing launchers, storage, manufacturing support, and training. Russia has been deploying these at scale in Ukraine, and the data from that theatre has been feeding directly back into counter-drone development. Hamas deployed simple, low-cost variants to devastating effect. Hezbollah has been actively training with drone swarms. What was once the exclusive domain of air forces with billion-dollar assets has become accessible at the level of proxy groups and sub-state actors.

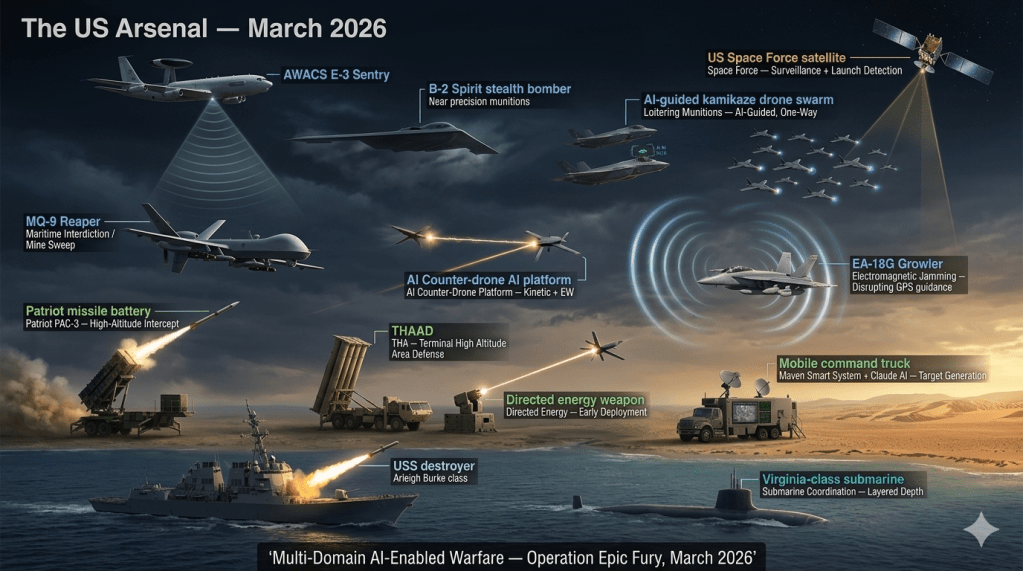

The US countermeasures stack is layered:

- Patriot and THAAD — layered missile defence (Patriot in the lower tier, THAAD at high altitude), well understood, expensive per intercept

- AI-enabled counter-drone platforms — tracking swarms against a “single integrated air picture,” with both kinetic and electronic warfare capability on the same platform

- Directed energy weapons — in early operational deployment, capability still maturing

- Electromagnetic jamming — disrupting drone communications and GPS guidance

- AWACS area coverage — now fully deployed in the Gulf

- MQ-9 drone squadrons — operating in the Gulf in mine-sweeping and maritime interdiction roles, each aircraft able to carry a few thousand pounds of munitions

- Submarine coordination — providing layered countermeasure depth

The countermeasures are impressive. But as Grant noted, the defenders still don’t have a perfect picture: “Sometimes it’s the defender’s problem — you don’t have a drone picture in the area.” Interception requires multiple systems operating simultaneously to achieve high success rates. That’s a costly response to a cheap attack.
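Why stack so many layers? The logic can be sketched as a simple probability calculation. This sketch assumes each layer acts independently and uses made-up per-layer intercept rates, not real figures for any of the systems listed above.

```python
from math import prod
from typing import Sequence

def leak_probability(intercept_probs: Sequence[float]) -> float:
    """Chance one drone survives every layer, assuming the layers act independently."""
    return prod(1.0 - p for p in intercept_probs)

def expected_leakers(swarm_size: int, intercept_probs: Sequence[float]) -> float:
    """Expected number of drones in a swarm that get past all layers."""
    return swarm_size * leak_probability(intercept_probs)

# Hypothetical per-layer intercept rates (NOT real figures), e.g. jamming,
# an AI-cued counter-drone platform, and a kinetic interceptor.
LAYERS = [0.6, 0.7, 0.8]

print(f"one layer only:   {leak_probability(LAYERS[:1]):.3f} leak chance per drone")
print(f"all three layers: {leak_probability(LAYERS):.3f} leak chance per drone")
print(f"100-drone swarm:  {expected_leakers(100, LAYERS):.1f} expected leakers")
```

Three mediocre layers beat one good one, which is why the stack exists. It’s also why it’s expensive: every layer has to be bought, deployed, and fired, while the attacker pays once per drone. Independence is the fragile assumption. If a drone’s navigation shrugs off jamming, that layer’s probability falls toward zero and the leak rate climbs.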

“This is their chance to really step up and show what they’re capable of doing — and they have.” — Dr. Rebecca Grant, VP Lexington Institute, on the US Space Force

The Space Force, often treated as a punchline since its founding in 2019, is now running live combat operations: surveillance satellites providing real-time battlefield imagery, drone tracking infrastructure, and launch detection. March 2026 has been described as its first genuine operational test at scale.

On the supply side: the Pentagon announced on March 9, 2026, that drone manufacturers had agreed to quadruple production of certain drone types, with full capacity online within 30 months; the announcement was also confirmed on Truth Social that day. The supply chain is accelerating in real time on both sides.

The Kill Chain Now Runs Through Server Rooms

Here’s something that wasn’t obvious to me until I started mapping the infrastructure: the war isn’t just fought with drones and missiles. Part of it is fought over — and inside — data centres.

In 2025 alone, Amazon committed $100 billion to AI infrastructure, Google $85 billion, Meta $72 billion — investments the Atlantic Council described as “dwarfing the national security budgets of the vast majority of states.” Nvidia’s market cap hit $5 trillion — technically larger than Germany’s entire economy (Atlantic Council, 2026). These figures matter for warfare because the same physical infrastructure that runs commercial AI runs military targeting systems. It’s the same GPUs, the same fibre optic cables, the same cooling systems and power grids.

The Middle East became the focal point of this buildout. Microsoft pledged $80 billion to Saudi Arabia and $15 billion to the UAE by 2029. Oracle committed $14 billion over ten years. The region’s data centre capacity is projected to more than triple, from 1GW in 2025 to 3.3GW by 2030 (CNBC, March 2026). The world’s most significant AI compute buildout is happening in the world’s most geopolitically volatile neighborhood.
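For a sense of pace, that projection implies a compound annual growth rate. This sketch just does the arithmetic on the CNBC figures, treating 2025 to 2030 as five compounding years; the function name is mine.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0

# CNBC projection: ~1 GW of regional data centre capacity in 2025 to ~3.3 GW by 2030.
START_GW, END_GW = 1.0, 3.3
YEARS = 2030 - 2025

growth = implied_annual_growth(START_GW, END_GW, YEARS)
print(f"{END_GW / START_GW:.1f}x overall, about {growth:.1%} per year")
```

That works out to roughly 27% more capacity every year, sustained for five years straight.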

Iran understood the dependency. Retaliatory strikes hit AWS cloud facilities in the UAE and Bahrain, taking down banking, payments, and enterprise services across the region (CNBC, March 2026). Governments are urgently reclassifying commercial data centres as critical national security infrastructure alongside power plants and oil fields (TechPolicy.Press, 2026). The legal argument is already being tested: infrastructure that supports military operations becomes a legitimate military target regardless of civilian ownership. “Dual use” creates significant legal ambiguity — and no binding international law currently resolves it (Cornell Law Review, August 2025).

“Data centers may now be considered legitimate targets for attack in modern armed conflicts.” — Center for Strategic and International Studies, via TechPolicy.Press, 2026

The semiconductor dependency underneath all of this adds another layer of fragility. Qatar produces over a third of the world’s helium supply — a gas critical to the lithography process used to manufacture AI processors (CNBC, March 10, 2026). TSMC’s fabrication facilities sit 110 miles from mainland China — and TSMC is actively expanding to Japan, the US, and Europe specifically to hedge against Taiwan conflict risk (Digitimes, March 2, 2026). A conflict that destabilises Qatar or triggers a Taiwan flashpoint doesn’t just disrupt global shipping — it disrupts the supply chain for the exact chips running the targeting systems. The Modern Enquirer (2026) published an analysis arguing that the US-Iran conflict is partly a function of the AI race with China: Iran’s regional position was seen as threatening the US’s ability to secure AI infrastructure and Middle Eastern influence.

The war for AI compute dominance and the war in the Middle East are now sharing supply chain vulnerabilities. That’s a new kind of interdependency.

The Companies That Drew Lines — Then Erased Them

This section is just the documented record. You can read what you want into it.

January 2024: OpenAI deleted explicit prohibitions on “military and warfare” from its usage policies (TechCrunch, January 12, 2024).

February 2025: Google revised its published AI Principles, dropping a long-standing pledge not to develop AI for weapons or surveillance.

June 2025: OpenAI secured a $200M ceiling contract with the Department of Defense for “OpenAI for Government” (TechCrunch, June 17, 2025).

2026: OpenAI formally partnered with the Department of War — the Pentagon’s name under the current administration — deploying ChatGPT on GenAI.mil, the secure enterprise platform serving 3 million civilian and military personnel. The partnership includes a narrow carve-out: the system “will not independently direct autonomous weapons where human control is required” (OpenAI, 2026).

2026: Google deployed eight Gemini AI agents inside Pentagon operations.

February 25, 2026: The Pentagon issued Anthropic a formal “best and final offer”: agree to unrestricted military access to Claude, or be designated a national security supply-chain risk. Anthropic’s stated redlines were no domestic mass surveillance and no fully autonomous weapons (The Defense News, 2026).

March 2026: Nearly 1,000 employees from Google and OpenAI jointly signed an open letter calling for clear limits on military AI — the first significant cross-company employee action (TechRadar, March 2026). Caitlin Kalinowski, who had led hardware and robotics at OpenAI since November 2024, resigned over the Pentagon deal, citing “domestic surveillance without judicial oversight” and “lethal autonomy without human authorization” (TechRadar, March 2026).

Context for scale: Google walked away from Project Maven in 2019 after four thousand employees protested. By 2026, it had eight Gemini agents deployed in the Pentagon, and this time the protest letter, spanning two companies, drew fewer than a quarter as many signatures. The gap between those two moments is seven years.

If Russia, China, and Iran Combined Their Technology — What Would That Look Like?

This is the scenario I keep circling back to. Not as a geopolitical prediction. Just as a technical thought experiment grounded in what’s already happening.

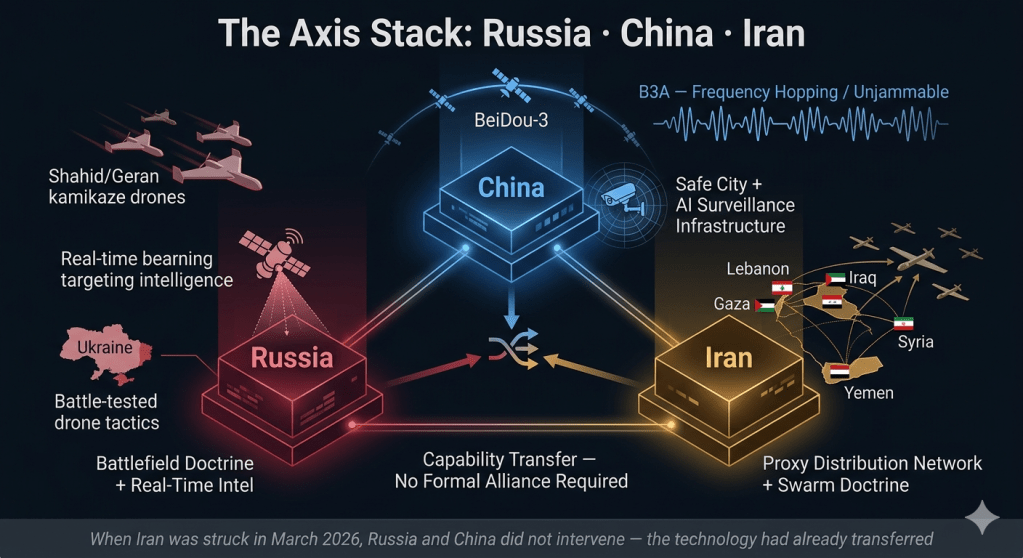

Each actor brings something distinct to the table.

Russia brings battlefield-tested drone doctrine. More than three years of industrial-scale drone warfare in Ukraine produced something no war game could replicate: real-world tactics for swarm attacks, counter-jamming, electronic warfare evasion, and target identification at speed. In March 2026, CNN reported — based on Western intelligence sources — that Russia is actively sharing those drone tactics with Iran, including specific UAS strategies developed in Ukraine being adapted against US and Gulf nation targets. Russia is also providing real-time targeting intelligence identifying the positions of American warships and aircraft in the Middle East (Washington Post, March 6, 2026; confirmed by multiple US officials to RFE/RL). That’s not future speculation. It’s happening now.

China brings navigation that is far harder for US electronic warfare to jam. This is the technical detail that stopped me. Iran confirmed in early 2026 that it was transitioning drone guidance systems from GPS to China’s BeiDou-3 navigation satellite network. BeiDou’s military B3A signal reportedly uses frequency hopping and Navigation Message Authentication specifically designed to resist jamming and spoofing — the exact countermeasures the US and Israel have been deploying against Iranian drones. Al Jazeera (March 11, 2026) and bne IntelliNews both reported this shift directly. If accurate, it means a significant pillar of the current US counter-drone advantage — electromagnetic jamming of GPS-guided drones — becomes progressively less effective as BeiDou adoption spreads through Iran’s fleet.

China also brings AI surveillance infrastructure that has military dual-use potential at scale: the same facial recognition, geolocation, and network integration systems deployed in “Safe City” packages across 80+ countries can be repurposed for battlefield population mapping and target identification. The civilian and military functions are running on the same stack.

Iran brings the proxy network and the doctrine. Russia and China have technology and resources. Iran has geographic reach — a proven network of proxy forces across Lebanon, Iraq, Yemen, Syria, and Gaza, each now receiving drone training and swarm tactics. That distribution layer means the technology doesn’t stay contained to the Iranian military. It propagates outward through proxy actors, each of which operates with a degree of deniability that makes conventional deterrence harder to apply.

What the combined picture starts to look like:

- Drone swarms guided by jam-resistant BeiDou navigation, using tactics battle-tested in Ukraine

- Real-time Russian satellite intelligence identifying US asset positions to feed targeting systems

- AI-enabled population surveillance covering partner nations, dual-use for battlefield awareness

- A proxy distribution network that can deploy these capabilities across multiple theatres simultaneously

- An emerging parallel financial backbone — BRICS cross-border settlement, yuan-ruble bilateral trade — that reduces vulnerability to Western sanctions pressure

“Russia and China have transitioned from diplomatic allies to ‘technological anchors’ for Iran — providing S-400 defenses, Su-35 fighters, and BeiDou-3 navigation to negate Western stealth and jamming capabilities.” — Special Eurasia, March 1, 2026

But here’s the complication. When US and Israeli strikes actually landed on Iran in early March 2026, Russia and China did not come to Iran’s defense. Not militarily. Not even rhetorically with any force. Al Jazeera (March 5, 2026) and CNBC (March 2, 2026) both reported the same thing: Iran’s allies kept their distance. The Iran-Russia Comprehensive Strategic Partnership signed in January 2025 has no mutual defense clause. The cooperation is real — but it appears to be transactional, not binding.

Which raises the actual question worth sitting with: does that distinction matter if the technology transfer has already happened? You don’t need a formal alliance to have already handed someone jam-resistant navigation, battlefield-tested tactics, and real-time targeting intelligence. The capability is there whether or not the treaty obligation is.

The technology cooperation is documented. The limits of the political commitment are also documented. The question of what you get when you combine the two — capable technology flowing through a fragile alliance — doesn’t have a clean answer yet.

The Signal Nobody’s Reading

I’m not going to tell you where this ends up. I genuinely don’t know — and I’m suspicious of anyone who claims certainty.

But here are the questions I keep turning over.

On the speed problem. When AI generates 1,000 targets before lunch and a human has thirty seconds per recommendation, what is the functional difference between human oversight and human authorization? The AP noted in March 2026 that AI “doesn’t lessen — it increases — the need for human judgment” precisely because commanders face more recommendations, faster (AP/Yahoo News, March 2026). The system is designed to be efficient. Efficiency and deliberation are in tension. How does institutional decision-making scale with machine throughput?
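The thirty-second figure is a hypothetical, but the arithmetic behind it is worth spelling out. This sketch assumes a 4-hour “before lunch” window (my assumption, not a reported number) and reviewers who never pause; the function name is mine.

```python
import math
from typing import Tuple

def review_load(recommendations: int, seconds_each: float, window_hours: float) -> Tuple[float, int]:
    """Return (total reviewer-hours, minimum reviewers needed to clear the queue in the window)."""
    total_seconds = recommendations * seconds_each
    window_seconds = window_hours * 3600
    return total_seconds / 3600, math.ceil(total_seconds / window_seconds)

# 1,000 recommendations at 30 seconds each, cleared within an
# assumed 4-hour morning window by reviewers who never take a break.
hours, reviewers = review_load(1_000, 30, 4)
print(f"{hours:.1f} reviewer-hours; at least {reviewers} reviewers working nonstop")
```

That’s more than eight hours for one person, or three people working without a pause, and it leaves no room for the follow-up question that genuine oversight would require.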

On the governance gap. There is currently no binding international law governing AI-assisted military targeting. The Tallinn Manual, the closest thing to a framework for cyber and AI warfare, is not legally enforceable (Cornell Law Review, August 2025). Congress has no oversight mechanism. The CFR noted that 2026 is the first year AI governance has entered “a truly global phase” — while “governance mechanisms remain far behind deployment reality” (CFR, 2026). Military AI is already at industrial scale. The governance conversation is still in draft.

On the surveillance layer spreading in parallel. China is rolling out what CNN called “authoritarian AI” — robotic police, real-time population monitoring, AI-driven censorship — domestically, and exporting the model globally (CNN, February 3, 2026). Carnegie Endowment’s March 2026 analysis found AI is now “integrated at multiple layers” of China’s censorship system — “using pattern recognition, anomaly detection, and behavioral scoring to flag users as suspicious” — and that this model is being actively exported (Carnegie Endowment, March 2026). Iran is already a documented deployment: when protests broke out in early 2026, the crackdown ran on Chinese-supplied surveillance infrastructure (Probe International, March 12, 2026). What does a world look like where that model has spread across dozens more countries — each running on the same underlying stack?

On infrastructure concentration. Amazon $100B. Google $85B. Meta $72B. Nvidia at $5 trillion (Atlantic Council, 2026). These companies are now larger geopolitical actors than most sovereign states. The Middle East AI infrastructure buildout represents an unprecedented concentration of compute in a geopolitically unstable region. Every enabling technology in history that achieved strategic infrastructure status — rail, oil, cables, nuclear — restructured power relations in ways that outlasted any particular conflict it enabled. What does it mean when that infrastructure is AI compute, and it’s owned by three or four corporations?

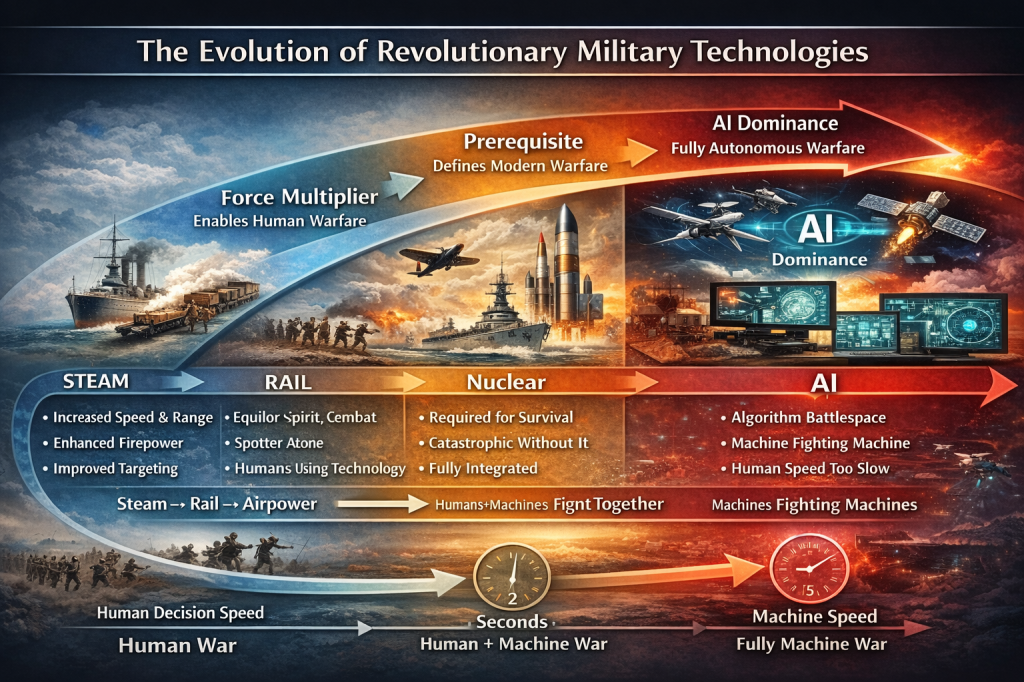

On the arc of this technology. Every major enabling technology in warfare follows a pattern: force multiplier → prerequisite → primary terrain. Steam, rail, airpower, nuclear — each one rewrote the rules of who could threaten whom and how fast. AI is following that arc. We’re somewhere between force multiplier and prerequisite right now. The question isn’t whether that changes the nature of conflict. It already has. The question is what comes after the upgrade finishes installing.

Is This the Beginning of World War III?

I’ve been trying to write this section for three days. I keep deleting it.

So let me just ask the question directly: is this World War III?

The honest answer from the people who study this for a living is: not yet — but the conditions have never been closer. The CFR’s 2026 conflict risk assessment places Iran, Ukraine, and a Taiwan cross-strait crisis all at Tier I — the highest impact category — simultaneously (CFR, 2026). Foreign Policy ran a piece on March 12, 2026, titled “The Irresistible Urge to Invoke World War III,” and its conclusion was not that the comparison was wrong but that the conditions driving it are genuinely different from anything since the Cold War.

How long does this last?

RAND’s analysts said in March 2026 that “historical diplomatic off-ramps appear to be nonexistent” because the US called for Iran’s unconditional surrender — making it “politically difficult for Iran’s leaders to come to the table without appearing to capitulate” (RAND, March 2026). Meanwhile, the Al Jazeera “12 days” analysis showed that what was originally planned as a short, sharp campaign — modelled on the June 2025 Twelve-Day War blueprint — has already trapped both the US and Israel in something longer and less contained (Al Jazeera, March 11, 2026).

As best I can map them, there are four possible trajectories:

Scenario 1 — Negotiated ceasefire (most likely near-term). Iran’s President Pezeshkian has publicly set terms: recognition of Iran’s legitimate rights, reparations, and firm international guarantees against future strikes (Al Jazeera, March 12, 2026). Oman — which hosted the secret US-Iran back channel that led to the 2015 nuclear deal — says “off-ramps are available” (Al Jazeera, March 3, 2026). Gulf states have the economic motive to end this fast. A ceasefire is not peace — but it’s the most available exit. This is the scenario where the war ends in weeks, not years, with both sides claiming enough to save face.

Scenario 2 — Extended war of attrition. If neither side blinks, you get a grinding conflict: sustained US air campaigns, Iranian proxy activation across multiple theatres, Strait of Hormuz leverage, and steady degradation of both sides’ AI and military infrastructure. The semiconductor supply chain starts to crack. Energy prices spike. The global economy absorbs it badly. This war doesn’t go nuclear or global — it just doesn’t end. Measured in months or years.

Scenario 3 — Escalation through proxy or miscalculation. The Russia-China-Iran technology transfers described in this article don’t require a formal alliance to produce a catastrophic outcome. One jam-resistant BeiDou-guided drone that sinks a US destroyer. One AI targeting error that strikes a Russian military adviser embedded with Iranian forces. One Hezbollah rocket barrage that kills 200 civilians and triggers a Lebanese ground war. Any of these could pull new actors in — not because anyone decided to escalate, but because the systems are too fast and the humans reviewing them are too stretched. This is the scenario that becomes the textbook definition of how great power wars accidentally start.

Scenario 4 — Forced de-escalation and eventual normalization. This is the one I keep hoping for. It’s not impossible. Both the US and Iran have strong economic motivations for an off-ramp. A weakened Iran, paradoxically, may be more negotiable than a nuclear-capable one. Trump has publicly said the war will end “soon.” Gulf states that have spent years quietly normalizing with Tehran have every reason to push for a settlement that reopens trade and stabilizes energy markets. And historically, even the most entrenched conflicts have ended when the cost of continuing exceeded the cost of stopping — sometimes within days of that calculation shifting.

“The risk of large-scale conflict is at its highest since the Cold War — but worldwide warfare is not inevitable. The greater risk is accidental escalation, not deliberate decision.” — CFR Conflict Risk Assessment, 2026

I keep that framing close. Accidental escalation, not deliberate decision. That’s the thing about AI in warfare that connects all the threads in this article: the systems are designed to go fast. Humans are not. The gap between those two speeds is where accidents live.

I don’t know which scenario we’re heading into. I don’t think anyone does. But the decisions being made right now — by people using AI tools none of us voted to deploy this way, at a speed no oversight framework was built for — are part of what determines it.

That’s not a comfortable place to end. But it’s the honest one.
